Data Pipeline Excellence: From Source to Insight

Our proven methodology transforms raw data into strategic business intelligence through a systematic, scalable pipeline approach.

End-to-End Data Pipeline Management

At Data:Hub Studio, we've perfected the art of data pipeline architecture. Our comprehensive approach ensures that your data flows seamlessly from collection to insight, with built-in quality checks, security measures, and optimization at every stage.

Whether you're dealing with real-time streaming data or massive batch processing jobs, our pipeline framework scales to meet your needs while maintaining data integrity and compliance.

Our pipelines are designed to grow with your business, adapting to new data sources and evolving requirements.

Intelligent orchestration that adapts to your data landscape

Core Pipeline Capabilities

Enterprise-grade data pipeline infrastructure designed for maximum performance

Embedded Engine

High-performance processing engine optimized for complex data transformations

Universal API

Connect to any data source with our extensive API framework

Real-Time Transformation

Process and transform data in real time as it flows through the pipeline

Metadata-Driven

Intelligent pipeline configuration based on data characteristics

Rapid Development

Build and deploy pipelines 10x faster with our low-code platform

Enterprise Security

Bank-grade security with encryption, authentication, and audit logging

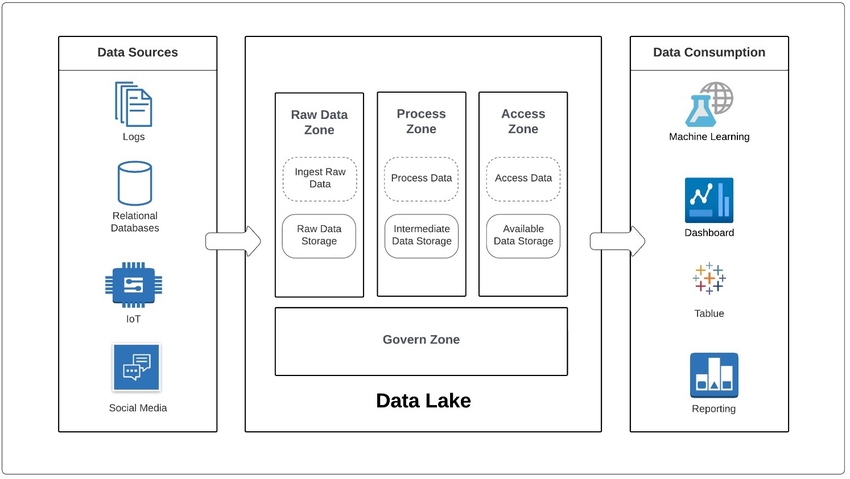

Multi-Zone Functional Architecture

Our pipeline architecture follows industry best practices with distinct zones for data processing

Landing Zone

Raw data ingestion and initial validation

Staging Zone

Data cleansing, standardization, and enrichment

Processing Zone

Complex transformations and business logic application
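To make the three zones concrete, here is a minimal Python sketch of how records might move from landing to staging to processing. It is an illustration only, not our production implementation: the function names, the JSON-lines input, and the `customer` and `amount` fields are all assumptions chosen for the example, and enrichment is left out for brevity.

```python
import json

def landing_zone(raw_lines):
    """Ingest raw lines; initial validation here means 'parses as a JSON object'."""
    accepted, rejected = [], []
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            rejected.append(line)
            continue
        if isinstance(record, dict):
            accepted.append(record)
        else:
            rejected.append(line)
    return accepted, rejected

def staging_zone(records):
    """Cleanse and standardize: lowercase keys, trim strings, cast amount to float."""
    staged = []
    for record in records:
        clean = {
            key.strip().lower(): (value.strip() if isinstance(value, str) else value)
            for key, value in record.items()
        }
        # Hypothetical field: 'amount' is assumed to be numeric or a numeric string.
        clean["amount"] = float(clean.get("amount", 0))
        staged.append(clean)
    return staged

def processing_zone(records):
    """Apply example business logic: total amount per customer."""
    totals = {}
    for record in records:
        customer = record.get("customer", "unknown")
        totals[customer] = totals.get(customer, 0.0) + record["amount"]
    return totals

raw = [
    '{"Customer": " acme ", "Amount": "12.5"}',
    "not json",
    '{"customer": "acme", "amount": 7.5}',
]
accepted, rejected = landing_zone(raw)
totals = processing_zone(staging_zone(accepted))
```

Keeping each zone as a separate function means rejected input never leaves the landing zone, and each stage can be tested or scaled on its own.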

Comprehensive Data Integration

Connect, process, and deliver data from any source to any destination

Multi-Source Connectivity

- JSON/XML APIs

- SQL & NoSQL Databases

- Cloud Platforms

- Files & Documents

Processing Capabilities

- Real-time streaming

- Batch processing

- Complex event processing

- Data quality validation

Output Destinations

- Optimized data warehouses

- Multiple format outputs

- Business dashboards

- Real-time monitoring

Intelligent Data Management

Data Quality Management

Automated data quality checks, validation rules, and anomaly detection ensure data integrity at every stage
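As a rough illustration of what validation rules and anomaly detection can look like, the sketch below checks records against per-field rules and flags outliers with a median-based (modified z-score) test. The rule set, the field names, and the 3.5 threshold are assumptions made for this example rather than a description of our platform's internals.

```python
from statistics import median

# Hypothetical validation rules: one predicate per required field.
RULES = {
    "id": lambda v: isinstance(v, int) and v > 0,
    "email": lambda v: isinstance(v, str) and "@" in v,
}

def validate(record, rules=RULES):
    """Return the names of fields that are missing or fail their rule."""
    return [
        field
        for field, check in rules.items()
        if field not in record or not check(record[field])
    ]

def find_anomalies(values, threshold=3.5):
    """Return indices whose modified z-score (median/MAD based) exceeds threshold."""
    if not values:
        return []
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # All values sit at the median (or nearly so); nothing can be scored.
        return []
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]

failed = validate({"id": -1})
outliers = find_anomalies([10, 11, 9, 10, 12, 100])
```

A median-based score is used here because a single extreme value distorts the mean and standard deviation far more than it distorts the median.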

Metadata Orchestration

Comprehensive metadata management provides full data lineage and impact analysis
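Lineage and impact analysis can both be viewed as graph traversals over dataset dependencies: lineage walks upstream, impact walks downstream. The sketch below shows the idea under that assumption. The `LineageGraph` class and the dataset names are hypothetical and exist only for this example.

```python
from collections import defaultdict, deque

class LineageGraph:
    """Directed graph of 'source feeds target' relationships between datasets."""

    def __init__(self):
        self._downstream = defaultdict(set)
        self._upstream = defaultdict(set)

    def add_edge(self, source, target):
        """Record that `target` is derived from `source`."""
        self._downstream[source].add(target)
        self._upstream[target].add(source)

    @staticmethod
    def _walk(start, edges):
        """Breadth-first traversal returning every node reachable from `start`."""
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in edges.get(node, ()):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        return seen

    def lineage(self, dataset):
        """Everything `dataset` was derived from, directly or indirectly."""
        return self._walk(dataset, self._upstream)

    def impact(self, dataset):
        """Everything that would be affected by a change to `dataset`."""
        return self._walk(dataset, self._downstream)

graph = LineageGraph()
graph.add_edge("crm_api", "landing.customers")
graph.add_edge("landing.customers", "staging.customers")
graph.add_edge("staging.customers", "warehouse.dim_customer")
graph.add_edge("staging.customers", "dashboard.sales")
affected = graph.impact("landing.customers")
```

Storing edges in both directions costs a little memory but lets either question be answered with the same traversal.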

Performance Optimization

Continuous monitoring and optimization ensure your pipelines run at peak efficiency

Pipeline Performance Metrics

Industry-leading performance across all pipeline operations

vs traditional ETL

Guaranteed SLA

Low-code platform

Cloud-native design

Pipeline Use Cases

Proven solutions for complex data challenges

Real-Time Analytics

Stream processing for instant insights from IoT devices, social media, and transaction systems

Data Lake Management

Organize and govern massive data volumes with automated cataloging and lifecycle management

API Orchestration

Connect and orchestrate multiple APIs to create unified data products and services

Ready to Build Your Data Pipeline?

Let our experts design and implement a custom pipeline solution for your organization